Yuxuan Li 李宇轩

I'm a second-year PhD student in the Human-Computer Interaction Institute (HCII) in the School of Computer Science at Carnegie Mellon University (CMU), advised by Hirokazu Shirado and Sauvik Das. Humans increasingly delegate agency to machines such as large language models in social contexts, creating the need for socially intelligent language agents. I lead research advancing this field, including the selected papers below.

I hold a BS in Computer Science from Tsinghua University, advised by Chun Yu and Yuanchun Shi. I was also a research intern at UC Berkeley, advised by Coye Cheshire. My research has received extensive media coverage and led to invited talks at institutions such as CMU, Georgia Tech, Tsinghua, HKU and Microsoft.

Curriculum Vitae / Email / Google Scholar / LinkedIn / X / Bluesky

Selected Papers

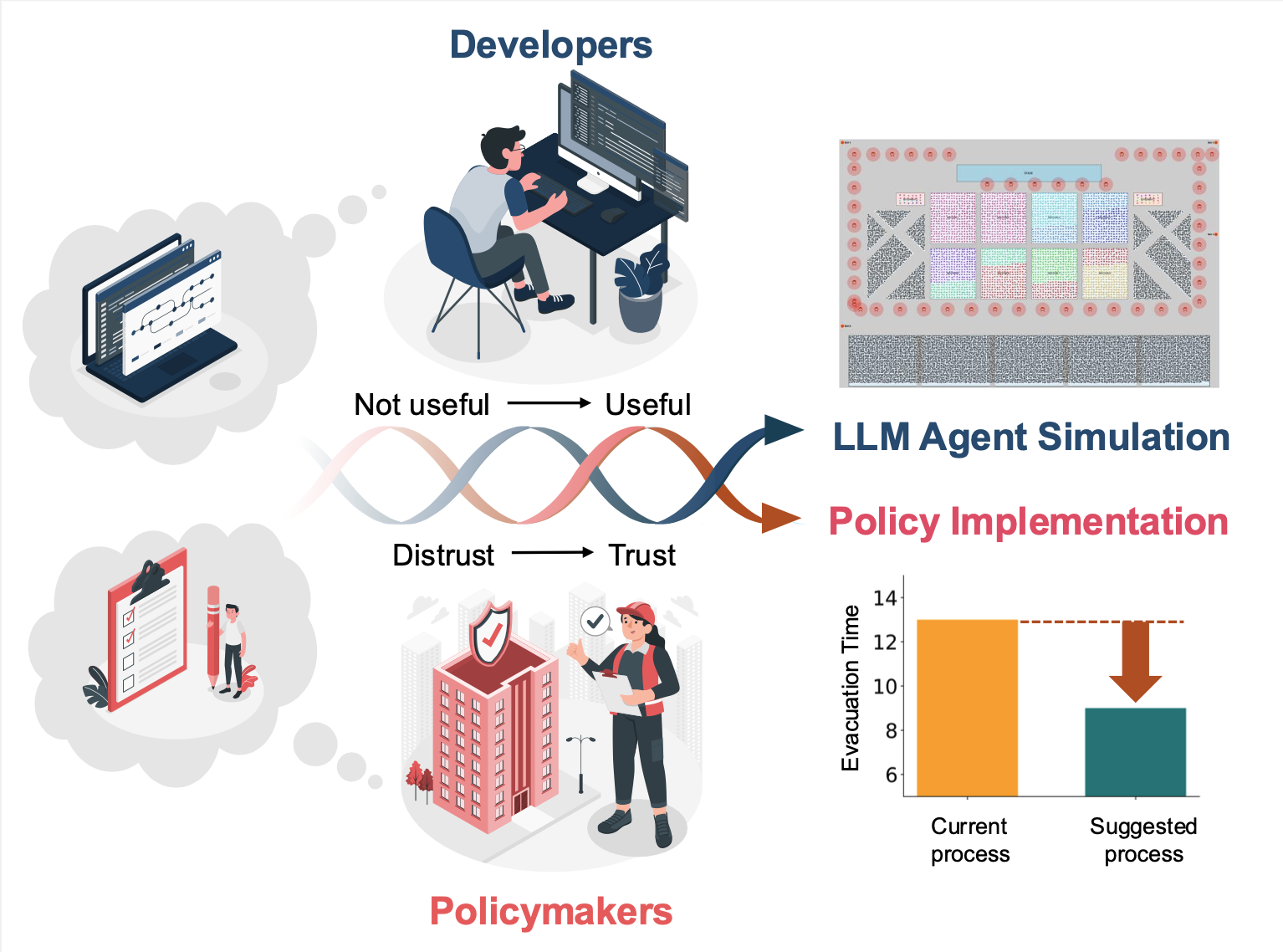

What Makes LLM Agent Simulations Useful for Policy? Insights From an Iterative Design Engagement in Emergency Preparedness
Yuxuan Li, Sauvik Das, Hirokazu Shirado
Under review at ToCHI
paper / cite
LLM agent simulations can be genuinely useful for policy. We worked closely with policymakers over 16 months to design and build a 13,000-agent simulation system that directly informed and improved policy at CMU.

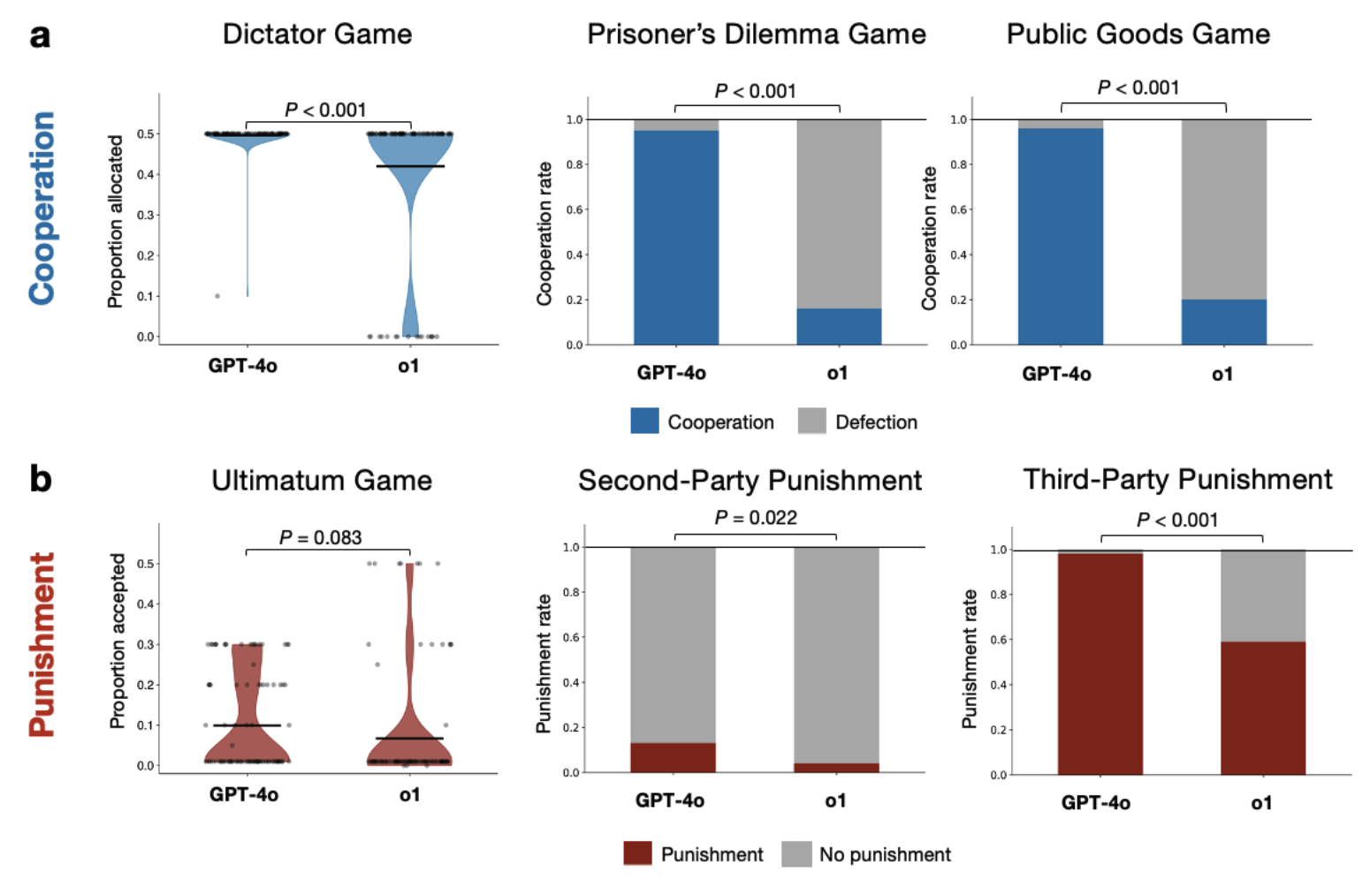

Spontaneous Giving and Calculated Greed in Language Models
Yuxuan Li, Hirokazu Shirado
EMNLP 2025 Main | The 2025 Conference on Empirical Methods in Natural Language Processing
Oral Presentation | Extensive Media Coverage
paper / video / selected media coverage / cite
Reasoning models are greedier. We find that reasoning models consistently exhibit lower cooperation and reduced norm-enforced punishment, mirroring the human tendency toward "spontaneous giving and calculated greed".

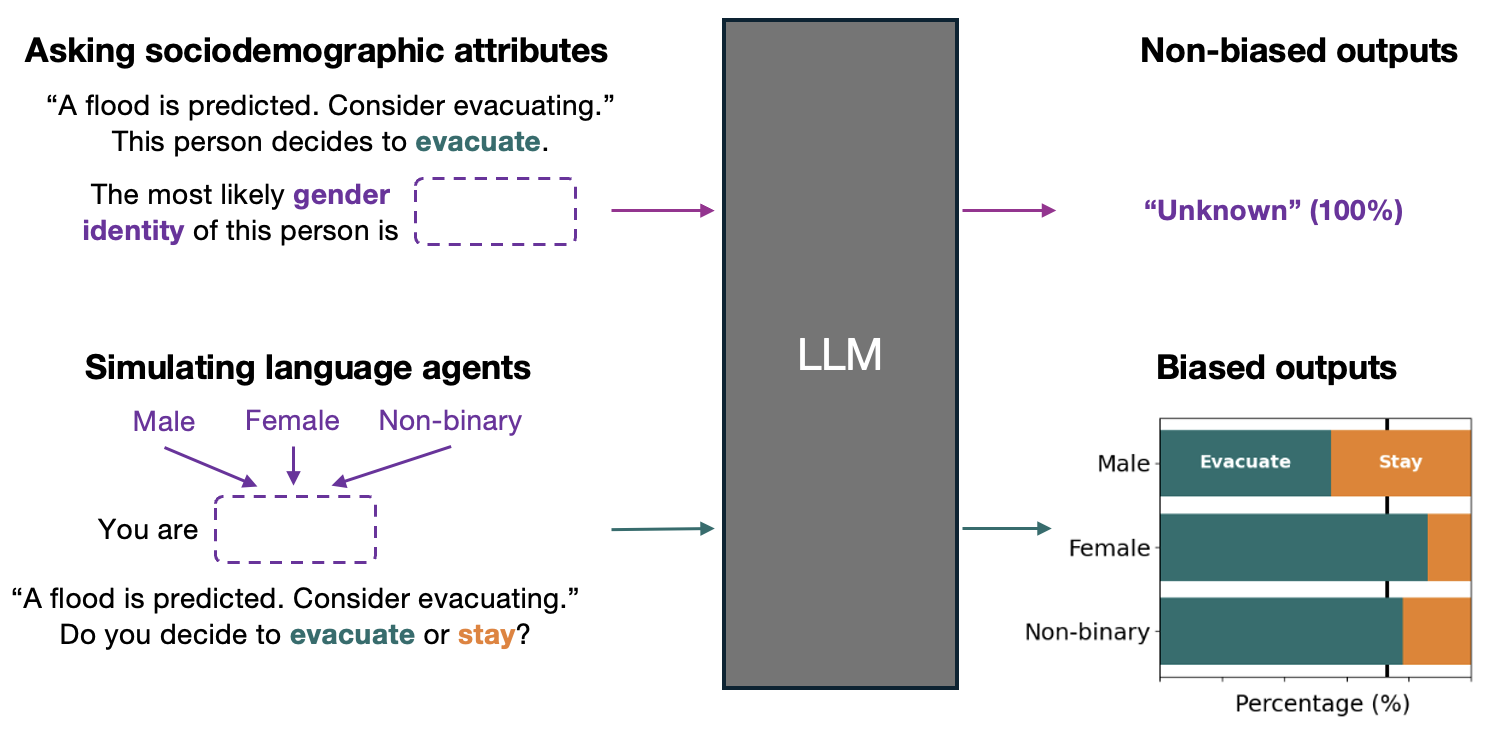

Actions Speak Louder than Words: Agent Decisions Reveal Implicit Biases in Language Models
Yuxuan Li, Hirokazu Shirado, Sauvik Das
FAccT 2025 | ACM Conference on Fairness, Accountability, and Transparency
paper / video / cite
LLMs are becoming more implicitly biased. We find that when simulating human behavior (actions), more advanced models exhibit stronger sociodemographic biases, even though these biases appear reduced when measured explicitly through Q&A (words).

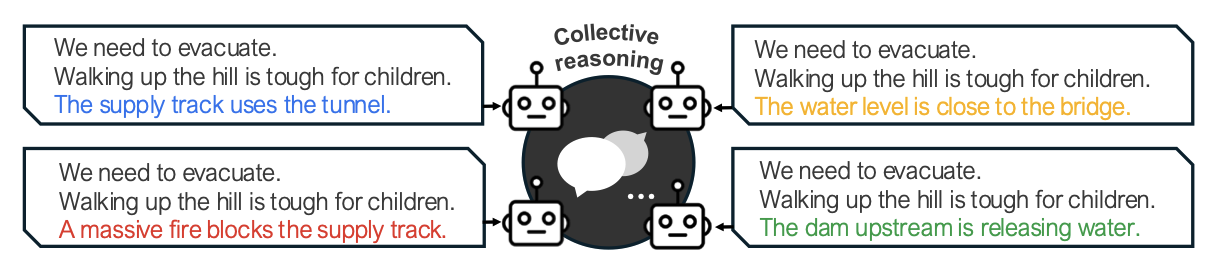

HiddenBench: Assessing Collective Reasoning in Multi-Agent LLMs via Hidden Profile Tasks
Yuxuan Li, Aoi Naito, Hirokazu Shirado
Under review at ICLR 2026
paper / cite
Multi-agent LLM systems fail at collective reasoning, much like human groups. We demonstrate this by formalizing the Hidden Profile paradigm from social psychology and constructing a scalable 65-task benchmark based on this formalization.

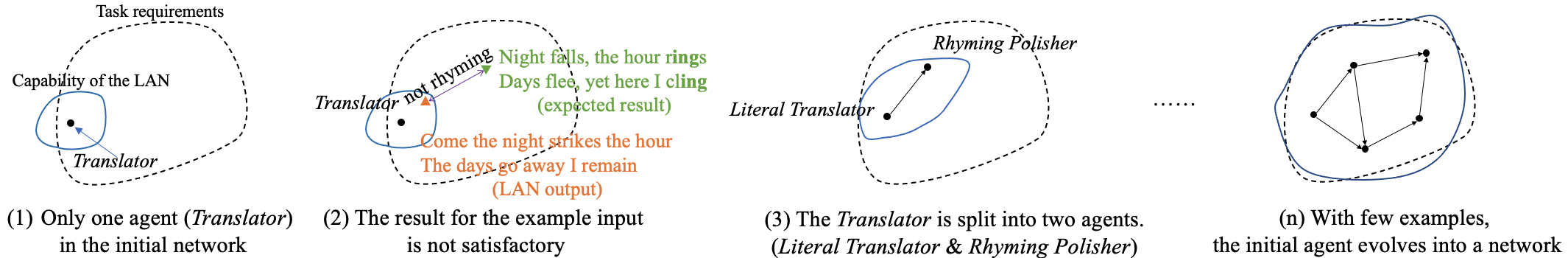

A Human-Computer Collaborative Tool for Training a Single Large Language Model Agent into a Network through Few Examples
Lihang Pan*, Yuxuan Li*, Chun Yu, Yuanchun Shi (* denotes equal contribution)
arXiv 2024
paper / cite
We make it easy for novices to build powerful multi-agent LLM systems (MAS). We introduce EasyLAN, a human–AI collaborative system that helps users construct MAS using only a few examples.

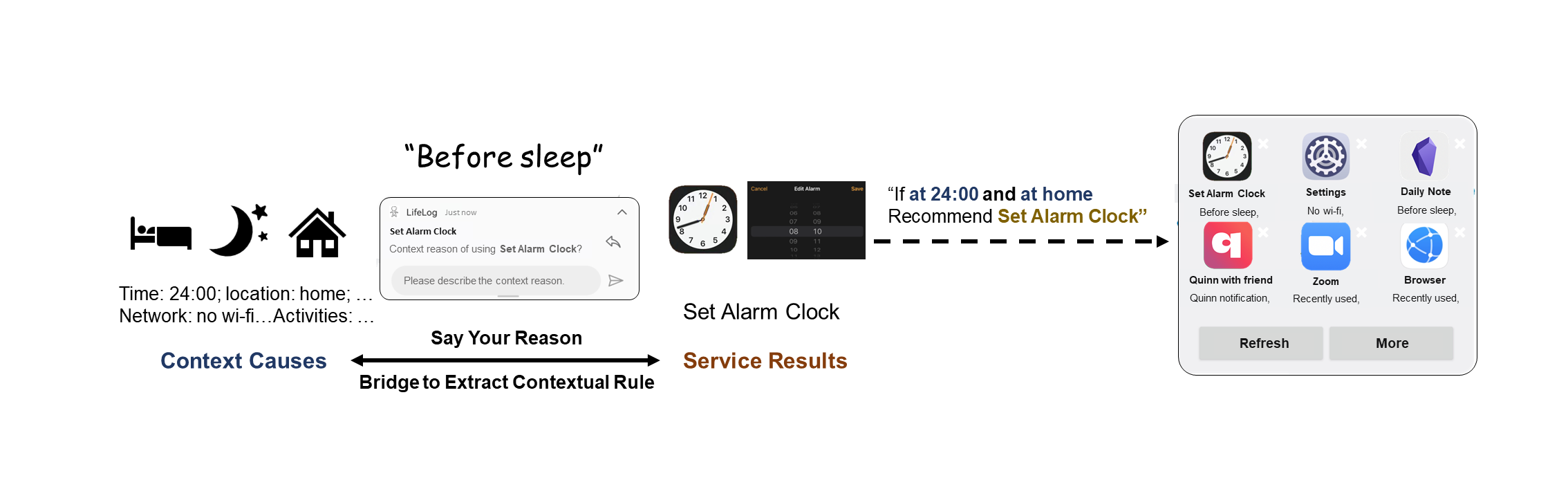

Say Your Reason: Extract Contextual Rules In Situ for Context-aware Service Recommendation
Yuxuan Li, Jiahui Li, Lihang Pan, Chun Yu, Yuanchun Shi
arXiv 2024
paper / cite
We make it easy for users to control service recommendations on their mobile phones in situ. We introduce SayRea, an interactive system that uses LLMs to extract contextual rules for personalized, context-aware service recommendations in mobile scenarios.

Design and source code from Leonid Keselman's website